RECOMMENDED NEWS

Apple loses AI whiz to Meta with an offer that will make your eyes water

It was just last month that OpenAI boss Sam Altman claimed that Meta had been trying to poach his to...

Read More →

Apple could finally fix Siri on iPhones with help from Google’s Gemini

“Find me a decent coffee shop where I can sit and get work done?” I uttered into my iPhone’s m...

Read More →

The success of WWDC 2025 hangs on Apple Intelligence. This is what it needs to

This story is part of our complete Apple WWDC covera...

Read More →

ChatGPT can now remember more details from your past conversations

OpenAI has just announced that ChatGPT received a major upgrade to its memory features. The chatbot ...

Read More →

ChatGPT’s Advanced Voice Mode now has a ‘better personality’

If you find that ChatGPT’s Advanced Voice Mode is a little too keen to jump in when you’re engag...

Read More →

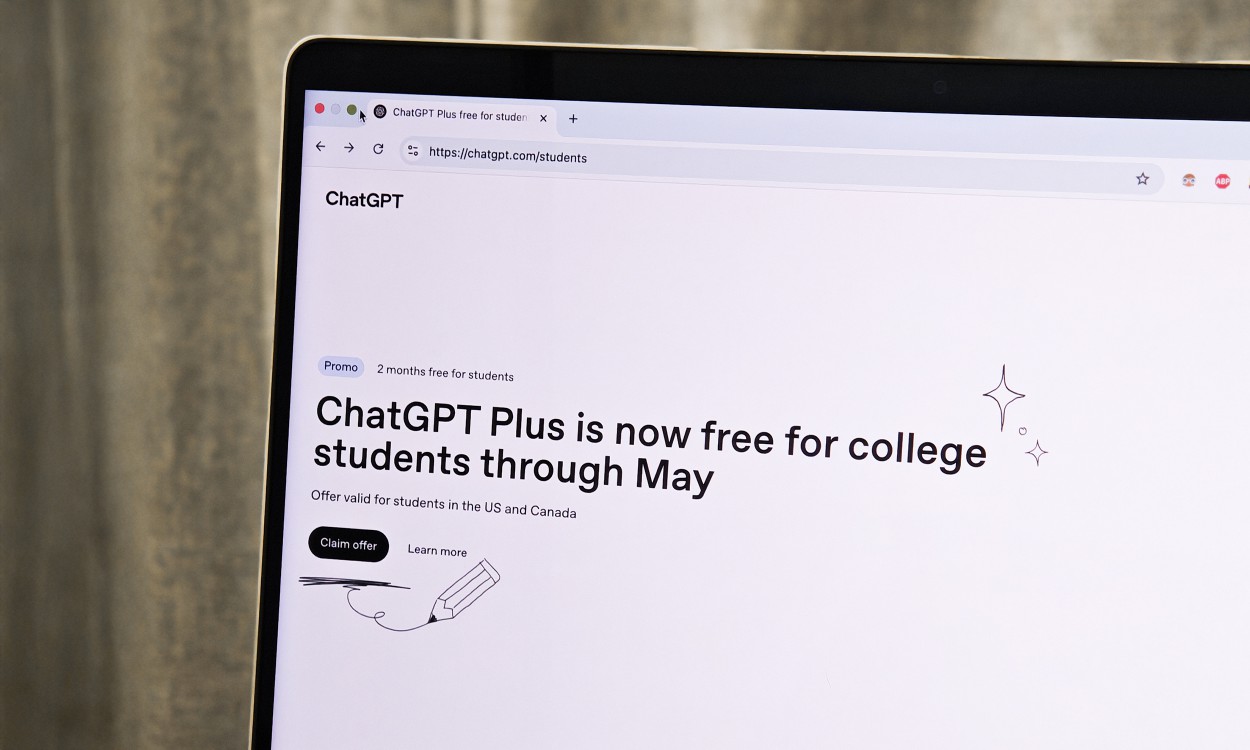

ChatGPT Plus is free for a limited time: Here’s how to check if you qualify

ChatGPT didn’t just emerge onto the AI scene, it birthed an entire revolution of AI assistants and...

Read More →

Ray-Ban Meta AI glasses go high fashion with Coperni limited edition

Meta delivered an unexpected runaway success with its Ray-Ban Stories smart glasses, and now, it is ...

Read More →

The Gemini app is now the only way to access Google’s AI on iOS

Google announced Wednesday that it is removing its Gemini AI model from the Google app on iOS,...

Read More →

Google Pixel 9 is getting a scam detection upgrade you’ll want on your phone

Over three months ago, Google started beta testing a new safety feature for Pixel phones that can se...

Read More →

Comments on "ChatGPT now interprets photos better than an art critic and an investigator combined":